In the world of Answer Engine Optimization (AEO) and Generative Engine Optimization (GEO), success is about being more visible than your competitors in AI-generated results.

If ChatGPT, Perplexity, or Gemini cites your competitors instead of you, those competitors have already earned algorithmic trust.

To reclaim that advantage, you need to measure not only how well your brand is optimized for AI engines, but also how your readiness compares with the rest of your industry.

What does AEO readiness actually measure?

AEO readiness reflects how prepared your brand is to be recognized, interpreted, and cited by AI systems.

It’s a mix of technical health, brand authority, and data structure quality — all of which determine whether AI can confidently reference your site.

The main readiness pillars are:

- Accessibility: Can AI engines crawl and understand your site content?

- Structure: Does your content use schema, clear headings, and FAQ markup for extractability?

- Authority: Is your brand recognized as a credible entity across the web? Read: What Does It Mean to Become an Authoritative Entity for AI Engines?

- Trust Signals: Are author bios, sources, and cross-references visible and verifiable? Learn about: How Can You Build Digital Trust Signals That AI Engines Recognize?

Benchmarking these four pillars shows where you lead, lag, or risk losing AI visibility to others.
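One concrete way to start on the Accessibility pillar is to check whether your robots.txt blocks AI crawlers at all. The sketch below uses Python's standard `urllib.robotparser` against a sample robots.txt string. The user-agent tokens listed (GPTBot, PerplexityBot, Google-Extended) are the names these vendors have published, but treat the list as an assumption and verify it against each vendor's current documentation. The helper name `ai_crawler_access` and the example.com URL are illustrative only.

```python
from urllib.robotparser import RobotFileParser

# Assumed list of AI-related user-agent tokens; confirm against vendor docs.
AI_AGENTS = ("GPTBot", "PerplexityBot", "Google-Extended")

def ai_crawler_access(robots_txt: str, url: str, agents=AI_AGENTS) -> dict:
    """Return {agent: True/False} for whether robots.txt lets each agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}

# Sample robots.txt that blocks one AI crawler site-wide (hypothetical content).
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /private/
"""

access = ai_crawler_access(SAMPLE_ROBOTS, "https://example.com/blog/post")
for agent, allowed in access.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

This only covers robots.txt rules. It says nothing about whether a page renders without JavaScript or how well an engine understands the content, so treat it as one input to the Accessibility score rather than the score itself.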

How can you assess competitors’ AEO performance?

Unlike traditional SEO — where rankings are public — AEO performance must be inferred through AI behavior. Here’s how to benchmark effectively:

- Run comparative prompt tests: Enter industry-relevant queries into ChatGPT, Perplexity, or Gemini and note which brands get cited or mentioned.

- Audit citation frequency: Track how often your competitors’ domains appear as AI sources over time. This reflects trust velocity — how quickly models learn to reference them.

- Evaluate entity accuracy: Check if AI descriptions of competitor brands are more complete or consistent than yours. This reveals which entities have stronger reinforcement across the web.

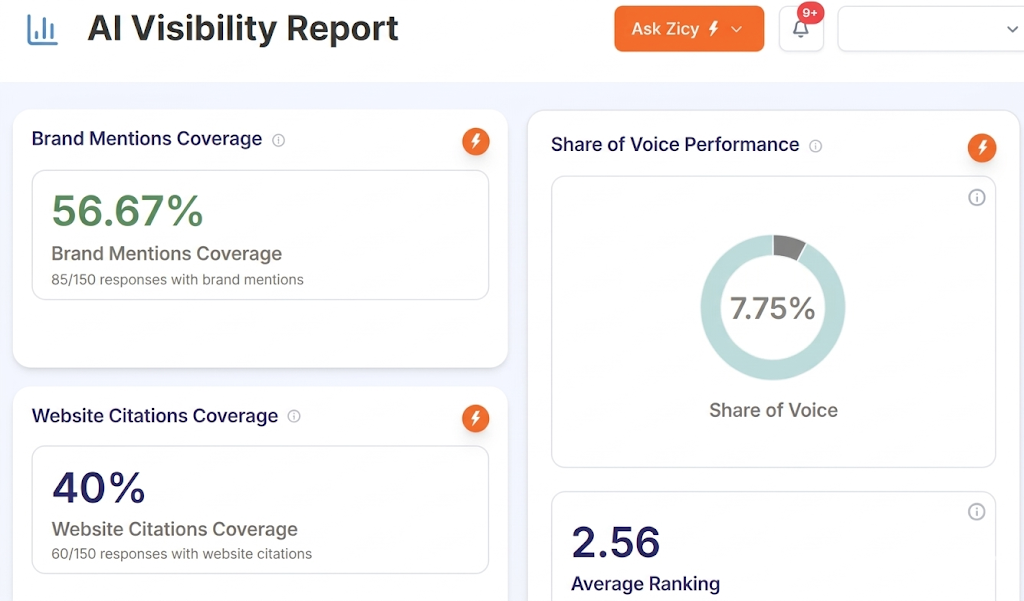

- Compare visibility share: Measure your share of conversation — how often your brand appears in generative answers compared to theirs.

Together, these metrics reveal not only who AI cites but why — uncovering the structural and credibility gaps you can close.
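If you log the results of your prompt tests by hand, the visibility share comparison can be computed with a few lines of Python. This is a minimal sketch under stated assumptions: each prompt test is recorded as the set of brands the AI answer cited, the brand names are placeholders, and share is defined as a brand's appearances divided by all appearances of the tracked brands. The function name `visibility_share` is hypothetical, and other definitions of share are equally reasonable.

```python
from collections import Counter

def visibility_share(prompt_results, brands):
    """Share of tracked-brand appearances per brand across logged AI answers.

    prompt_results: iterable of collections, one per prompt, naming cited brands.
    brands: the brands being tracked (your own plus competitors).
    """
    tracked = set(brands)
    counts = Counter()
    for cited in prompt_results:
        # Count each brand at most once per answer.
        for brand in set(cited) & tracked:
            counts[brand] += 1
    total = sum(counts.values())
    if total == 0:
        return {brand: 0.0 for brand in brands}
    return {brand: counts[brand] / total for brand in brands}

# Hypothetical log of four prompt tests.
results = [
    {"YourBrand", "RivalA"},
    {"RivalA"},
    {"RivalA", "RivalB"},
    {"YourBrand"},
]
shares = visibility_share(results, ["YourBrand", "RivalA", "RivalB"])
for brand, share in shares.items():
    print(f"{brand}: {share:.0%}")
```

Re-running the same prompt set at regular intervals and comparing the shares gives a simple view of citation frequency over time.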

How do you create an actionable AEO readiness score?

Transform benchmarking into strategy by turning qualitative comparisons into quantitative insight.

Assign a 1–5 score for each pillar — Accessibility, Structure, Authority, Trust — for both your brand and key competitors.

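Then express your combined score as an index relative to your competitors' combined score, with 100 representing parity.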

A score below 100 indicates competitors have stronger AI recognition; above 100 means you’re leading in overall readiness.
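One way to compute an index consistent with that 100 threshold is sketched below. It assumes the index is your total pillar score divided by the average total of your competitors, multiplied by 100. Benchmarking against the single strongest competitor instead of the average would be just as defensible. The function names, pillar keys, and sample scores are all illustrative.

```python
PILLARS = ("accessibility", "structure", "authority", "trust")

def total_score(scores):
    """Sum the four pillar scores, checking each is on the 1-5 scale."""
    for pillar in PILLARS:
        if not 1 <= scores[pillar] <= 5:
            raise ValueError(f"{pillar} score must be between 1 and 5")
    return sum(scores[pillar] for pillar in PILLARS)

def readiness_index(own, competitors):
    """Index where 100 means parity with the competitors' average total (assumed formula)."""
    competitor_avg = sum(total_score(c) for c in competitors.values()) / len(competitors)
    return round(100 * total_score(own) / competitor_avg, 1)

def pillar_gaps(own, competitors):
    """Your score minus the competitor average, per pillar (negative means you lag)."""
    n = len(competitors)
    return {p: own[p] - sum(c[p] for c in competitors.values()) / n for p in PILLARS}

# Hypothetical scores for illustration only.
own = {"accessibility": 3, "structure": 4, "authority": 2, "trust": 3}
rivals = {
    "RivalA": {"accessibility": 4, "structure": 4, "authority": 4, "trust": 3},
    "RivalB": {"accessibility": 3, "structure": 3, "authority": 3, "trust": 4},
}

print(readiness_index(own, rivals))  # 12 / 14 * 100, rounded to one decimal
print(pillar_gaps(own, rivals))
```

The per-pillar gaps are often more useful than the headline number, because they show which pillar is pulling the index below 100.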

This single number becomes a strategic KPI you can include in quarterly reports to track improvement.

Also read: How to Present AEO/GEO Performance in C-Suite Marketing Reports?

Why is benchmarking AEO readiness critical now?

AI engines are updated continuously. Once they settle on which brands to trust, that hierarchy can become self-reinforcing: cited brands tend to keep getting cited.

Benchmarking ensures you’re not falling behind in an invisible race for algorithmic trust.

By auditing your AEO readiness regularly, you gain foresight — not just into performance, but into how the next generation of search engines perceives your authority.

The key takeaway

Benchmarking AEO readiness isn’t just a competitive exercise — it’s a visibility safeguard. If you’re not tracking how AI systems perceive your brand versus your peers, you’re optimizing in the dark.

In the AI era, awareness is an advantage — and readiness is reputation.